The EU's new Cyber Resilience Act is about to tell us how to code

First a round of thanks for the many people in industry and government who provided valuable links, background and insights! I could not have done this without your help! If you spot any mistakes, or have suggestions, please do contact me on bert@hubertnet.nl

The EU’s new Cyber Resilience Act is admirable in its goal. And the EU is not alone in thinking something needs to be done about the dreadful state of security online – the Biden administration has just released its National Cybersecurity Strategy that has similar aims.

March 18 UPDATE: There is now a second post on the practicalities of the EU CRA, and things that really appear to be wrong there.

tl;dr

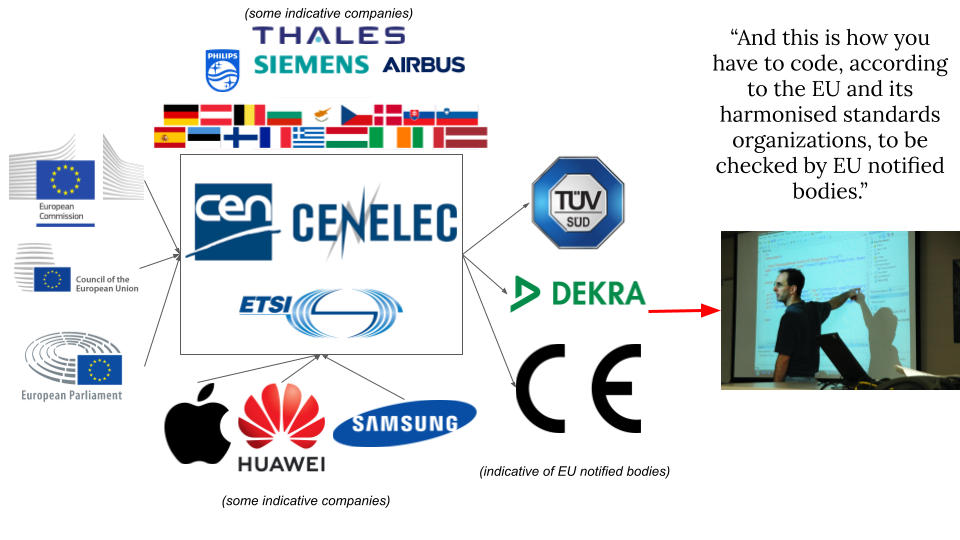

The extremely short version: The EU is going to task a standardisation body to write a document that tells everyone marketing products and software in the EU how to code securely. This is meant to further the EU Essential Cybersecurity Requirements. For critical software and products, EU notified bodies (which until now have mostly done physical equipment and process certifications) will do audits to determine if code and products adhere to this standard. And if not, there could be huge fines.

Rightmost photo by Wonderlane on Unsplash


Do read on, or head for the Very Short Summary.

The EU Cyber Resilience Act (CRA)

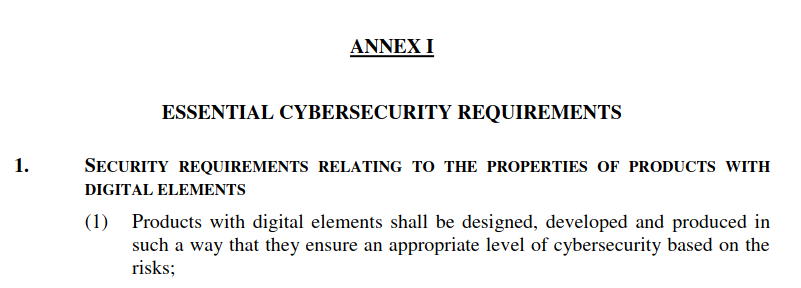

The CRA defines a set of essential cybersecurity requirements that every connected device and almost every piece of software distributed in Europe will have to adhere to:

“The proposed Regulation will apply to all products with digital elements whose intended and reasonably foreseeable use includes a direct or indirect logical or physical data connection to a device or network. (…) a broad scope of tangible and non-tangible products with digital elements, including non-embedded software”

This is basically all software and hardware (although there are some exceptions). Non-adherence comes with Significant Fines (15 million euros or 2.5% of annual turnover, whichever is higher). Some kinds of devices and programs have to adhere to higher standards (by getting third party audits), and these get called out explicitly: operating systems (Linux), microprocessors, boot managers (Grub), network interfaces (Ethernet, WiFi), remote access software (RDP), patch management systems, firewalls, routers, modems, browsers (Chrome, Firefox), network traffic monitoring systems, microcontrollers, public key infrastructure, virtual private networks and more.
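To make the "whichever is higher" rule concrete, here is a minimal sketch of the fine ceiling as I read it from the figures above. The function name and the sample turnovers are my own illustrations, and this only computes the maximum, not what any authority would actually levy.

```python
def max_cra_fine(annual_turnover_eur: int) -> int:
    """Ceiling of the fine in whole euros: the higher of EUR 15 million
    or 2.5% of annual turnover (figures as quoted in the text above)."""
    fixed_cap_eur = 15_000_000
    # 2.5% expressed as 25/1000 so the arithmetic stays in integers.
    turnover_share_eur = annual_turnover_eur * 25 // 1000
    return max(fixed_cap_eur, turnover_share_eur)


# Illustrative turnovers only, not real companies.
for turnover in (2_000_000, 600_000_000, 10_000_000_000):
    print(f"turnover {turnover:>14,} EUR -> ceiling {max_cra_fine(turnover):>11,} EUR")
```

Under this reading the percentage only starts to matter above 600 million euros of turnover. For everyone smaller, the ceiling is a flat 15 million euros, which for a small company can be many times its annual turnover.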

Photo by Antoine Schibler via Unsplash


The act specifically applies to ‘pure software’ as well (even if not part of a device), which is revolutionary. There is a somewhat ambiguous carve-out for open source software, but lots of people in the open source world will still (rightfully) worry that the CRA will apply to them. Specifically, the carve-out is worded with many caveats, which means that if you for example accept a donation from a company for your open source work, you might already be in scope.

It is the universal expectation that Linux will fall under the Cyber Resilience Act, even though it is not clear what Linux is exactly, nor who programs it and therefore who should comply with the act, which includes such fun things as mandatory third party audits by government certified companies.

In this post I try to set out what this new act aims to achieve (pretty good), what requirements it defines (could be improved), what this might mean in practice (very much in the air, could be very very bad) and who will actually have to do the (compliance) work (highly unclear).

This page is aimed at everyone who might be impacted by the CRA, but also at policy makers who are interested in what this act looks like from the world of (open source) software developers. It appears it is the intention to rush the CRA through before the European elections next year, and I think this justifies intense scrutiny of what is going on right now. If it is rushed, the effects of the CRA might be terrible, especially for European innovation.

109 organizations have already provided the European Commission with feedback. I can also recommend this piece by my friends from NLnet Labs. Some further reading can be found at the end of this post.

Very short summary

- The act will apply to most physical devices with a network connection, and to most software as well, even if not embedded in a device

- Even software that is not very critical will require work under this act, for example to formally determine its “low risk status”, which does not appear to be easy

- There is a caveated carve-out for open source software, but it leaves room for interpretation (when are you part of a ‘commercial activity’?)

- Europe and open source (innovation) have been good for each other. But if you worry about getting a 15 million euro fine, you might decide to no longer do it.

- The CRA describes ’essential cybersecurity requirements’ which are well intended, and mostly ok, but leave a lot of room for interpretation. These requirements tell us to implement adequate denial of service & exploit mitigation techniques, for example, and no one can be certain what that means

- The interpretation will be done by an as yet unspecified European Standards Organisation (likely CEN, CENELEC or ETSI), where further unspecified industry members will turn these requirements into an actual standard. This is a courageous and novel way of using this standardisation process to set mandatory software development standards

- A standard like this has never been written, let alone as an official EU harmonised standard!

- As long as there is no completed standard, the CRA will lead to a lot of irritation and anxiety in the broader tech industry. We are not going to wait until the ink is dry before we start worrying.

- Unlike the US National Cybersecurity Strategy, the CRA will impact everyone, and not only “manufacturers and software publishers with market powers” (strategic objective 3.3).

- Almost all software creators and electronic device vendors will at the very least have to self-assess if they adhere to this yet to be written standard, or if they are sure they are low-risk

- If they don’t do this assessment, or are not in compliance, fines of 15 million euros or 2.5% of turnover (whichever is higher) can be levied

- And even if this won’t likely really happen to smaller companies, it says in the text that it could

- Since software is often bundled and/or embedded into devices, anyone marketing a product will have to assure themselves that the components they ship are individually in compliance

- For the most critical products, of which there are many, third-party assessment is required. This is expected to lead to billions of euros of auditing activity, for which there is no capacity yet (and also no standard). Also, there are barely any designated ’notified bodies’ yet that can do such audits

- There are lots of open ends. Who is going to audit Linux? And who is the Linux vendor? If you download a US software product to Europe, are you then an Importer under the terms of the CRA?

- It is not yet clear who specifically the CRA will apply to, and this lack of clarity may mean that particularly (risk averse) US organizations might start to discourage EU entities from using their open source libraries. In turn, US entities might no longer accept contributions from EU based commercially employed programmers, again for fear the CRA will draw them in. This is a specific worry for innovative software products that are not specifically marketed to the EU, but do see great use there

For lots more details and nuance, do read on.

Revolutionary?

I mentioned that applying this kind of regulation to pure (’non-embedded’) software is a revolution. Software is typically not bought. Instead, a user acquires a license to run software. This license often has words like:

Copyright (C) 2022 Free Software Foundation, Inc.

License GPLv3+: GNU GPL version 3 or later <http://gnu.org/licenses/gpl.html>

This is free software: you are free to change and redistribute it.

There is NO WARRANTY, to the extent permitted by law.

If someone wants a guarantee with their software, they’ll have to arrange for that separately. Licenses typically exclude all liability and guarantees explicitly. This has been standing practice for decades.

Now, if you actually buy a (physical) product (say, a toaster or a vacuum cleaner), the store can’t do this. You have all kinds of rights, based on reasonable expectations of what a toaster will do. In addition, at least in Europe, there are stringent regulations on how much power a vacuum cleaner is allowed to use, for example. You can’t sell a consumer a non-compliant vacuum cleaner, no matter how many pieces of paper you make them sign saying there is no warranty and no compliance. And it doesn’t matter if you give away the product for free, by the way.

In contrast, software has typically been legislated as ‘information’, stuff users can download, and where the author can be secure that they only provided a piece of information, information which in itself doesn’t “do” anything. As we used to say, if it breaks, you get to keep both parts.

Now, in a real sense, this probably has not been true for a while. If for example I distribute an app that melts your phone, it is unlikely that I’ll be walking away from that saying that all I did was license your use of some information.

As a further example of how the EU has already slowly started to regulate pure code, the Digital Services Directive also sort of applies to software, but with a pretty strong carve-out for open source:

Free and open source software, where the source code is openly shared and users can freely access, use, modify and redistribute the software or modified versions thereof, can contribute to research and innovation in the market for digital content and digital services. In order to avoid imposing obstacles to such market developments, this Directive should also not apply to free and open source software, provided that it is not supplied in exchange for a price and that the consumer’s personal data are exclusively used for improving the security, compatibility or interoperability of the software.

This carve-out is a lot stronger than the one in the CRA, which only excludes “open source outside the context of a commercial activity” (recital 10) and so leaves a lot of room for interpretation.

So what is in the act?

Firstly, in itself it is a sign of maturity that regulation is starting to apply to networked devices. It is somewhat odd that my kitchen appliances are heavily regulated for safety, but I can buy a network connected camera with a default password that on its own can take out a whole hospital’s communication systems, and that this is all legal (both on the side of the camera and the hospital!).

I understand that it can be frustrating if the government (including the US) starts to take an interest in how you do your work, but given the dreadful state of computer security, we can’t credibly claim things are going well. So, let’s dive in.

Item 1 of the essential security requirements states that cybersecurity is very important, and that products should be secure. I’m sure we all agree with that sentiment. This item also states that the efforts should be appropriate compared to the risks. This is good news for, say, electronic picture frames.

The second item is as brief as it is potent:

Products with digital elements shall be delivered without any known exploitable vulnerabilities;

This is so obvious that it is somewhat weird to finally see it explicitly in writing. The current situation is comparable to being allowed to bring a car to market while knowing the brakes have problems. Note that the article is careful to specify “known” and “exploitable” vulnerabilities. These are things you can comply with, unlike say a blanket statement that you should not ship with any vulnerabilities. They need to be actually exploitable in your product. At least, I think this is what it means, and it might be good to clarify that.

Update: Several readers have discovered a big problem with this requirement. It effectively means that the moment a vulnerability is reported in a product or piece of software, that product must immediately be taken offline and off the shelves. Taken literally, this means that no major piece of software could ever ship.

Then the essential security requirements get more specific and itemize what products ‘with digital elements’ should comply with. The first one is actually very good, and one we’ve all been craving:

(3) On the basis of the risk assessment referred to in Article 10(2) and where applicable, products with digital elements shall:

(a) be delivered with a secure by default configuration, including the possibility to reset the product to its original state;

Awww yes! No more ‘admin/admin’ default login! By law! Ready to break out the European Anthem here (10 hours of European anthem).
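As an illustration of what "secure by default" could mean for credentials, here is a minimal sketch. It assumes a device that generates a unique random admin password at first boot and refuses to be set back to a well-known default. The deny-list, the config layout and all function names are hypothetical; nothing here comes from the CRA text or from any eventual standard.

```python
import secrets

# Hypothetical deny-list of shared factory credentials (illustrative, far from complete).
KNOWN_DEFAULTS = {("admin", "admin"), ("admin", "password"), ("root", "root"), ("admin", "")}


def is_factory_default(username: str, password: str) -> bool:
    """True if the pair matches a well-known shared default, ignoring case."""
    return (username.lower(), password.lower()) in KNOWN_DEFAULTS


def provision_device() -> dict:
    """Build a first-boot configuration (assumed layout, not a real product's)."""
    return {
        "admin_user": "admin",
        # Unique random password per device instead of one shared default.
        "admin_password": secrets.token_urlsafe(16),
        # Remote access stays off until the owner explicitly enables it.
        "remote_access_enabled": False,
    }


def set_password(config: dict, new_password: str) -> dict:
    """Return an updated config, refusing to fall back to a known default."""
    if is_factory_default(config["admin_user"], new_password):
        raise ValueError("refusing well-known default credentials")
    return {**config, "admin_password": new_password}
```

Whether a per-device random password printed on a label would satisfy requirement (a), including the reset to "original state", is exactly the kind of detail the eventual standard would have to settle.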

Item (b) continues to go well, although you can already wonder what this means precisely:

(b) ensure protection from unauthorised access by appropriate control mechanisms, including but not limited to authentication, identity or access management systems;

Several more fairly benign requirements follow, although they could be explained or expanded badly (more about this later):

(c) protect the confidentiality of stored, transmitted or otherwise processed data, personal or other, such as by encrypting relevant data at rest or in transit by state of the art mechanisms;

(d) protect the integrity of stored, transmitted or otherwise processed data, personal or other, commands, programs and configuration against any manipulation or modification not authorised by the user, as well as report on corruptions;

(e) process only data, personal or other, that are adequate, relevant and limited to what is necessary in relation to the intended use of the product (‘minimisation of data’);

But then we hit:

(f) protect the availability of essential functions, including the resilience against and mitigation of denial of service attacks;

Did the EU just require me to make software that can withstand (unspecified) denial of service attacks? Something that is mathematically proven impossible?

(g) minimise their own negative impact on the availability of services provided by other devices or networks;

I could speculate what this means, and I am sure other people will do so as well.

(h) be designed, developed and produced to limit attack surfaces, including external interfaces;

To which I say, nice. However, the ‘zero trust’ community might feel this is not necessary.

(i) be designed, developed and produced to reduce the impact of an incident using appropriate exploitation mitigation mechanisms and techniques;

(j) provide security related information by recording and/or monitoring relevant internal activity, including the access to or modification of data, services or functions;

Well, I’m all in favour of these two sentiments obviously, but I don’t think we’ll ever come to an agreement on what they mean. And the eventual standard (more about which later) might get it all wrong.

We end on a high note:

(k) ensure that vulnerabilities can be addressed through security updates, including, where applicable, through automatic updates and the notification of available updates to users.

This could in fact even be worded more strongly. Maybe replace “can” by “will” for example.

Behavioural rules

The requirements so far relate to our software and hardware. But the act also has words on things we should do:

(1) identify and document vulnerabilities and components contained in the product, including by drawing up a software bill of materials in a commonly used and machine-readable format covering at the very least the top-level dependencies of the product;

Round of applause! People might say this is hard, but if you find it too hard to discover just what you are shipping, you should not be shipping.
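To show how little is needed for the bare minimum (top-level dependencies in a machine-readable format), here is a minimal sketch that emits a JSON document whose field names are loosely modelled on CycloneDX. The product name, the dependency names and the versions are invented for illustration, and I have not validated the output against the CycloneDX schema.

```python
import json


def build_sbom(product: str, version: str, dependencies) -> dict:
    """Build a minimal SBOM-like document.

    dependencies: iterable of (name, version) pairs for the top-level
    dependencies only. Field names are loosely modelled on CycloneDX.
    """
    return {
        "bomFormat": "CycloneDX",
        "specVersion": "1.4",
        "version": 1,
        "metadata": {
            "component": {"type": "application", "name": product, "version": version}
        },
        "components": [
            {"type": "library", "name": name, "version": ver}
            for name, ver in sorted(dependencies)  # sorted for stable output
        ],
    }


# Hypothetical product and dependencies, for illustration only.
sbom = build_sbom(
    "example-camera-firmware",
    "0.1.0",
    [("zlib-example", "1.0"), ("http-example", "2.3")],
)
print(json.dumps(sbom, indent=2))
```

In practice you would generate the dependency list from your build system or package manager rather than typing it in. The hard part is knowing what you ship, not serialising it.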

(2) in relation to the risks posed to the products with digital elements, address and remediate vulnerabilities without delay, including by providing security updates;

Again, cheers, although the “without delay” is already causing worry in some circles. There are legitimate reasons for delay, and some of these reasons are other laws, sometimes in other countries.

(3) apply effective and regular tests and reviews of the security of the product with digital elements;

More about this later, as well-meaning aspirational requirements can turn out to be either too weak or too draconian.

(4) once a security update has been made available, publically disclose information about fixed vulnerabilities, including a description of the vulnerabilities, information allowing users to identify the product with digital elements affected, the impacts of the vulnerabilities, their severity and information helping users to remediate the vulnerabilities;

That should spell the end of “various improvements and bug fixes” release notes.

(5) put in place and enforce a policy on coordinated vulnerability disclosure;

🎉

(6) take measures to facilitate the sharing of information about potential vulnerabilities in their product with digital elements as well as in third party components contained in that product, including by providing a contact address for the reporting of the vulnerabilities discovered in the product with digital elements;

🥳

(7) provide for mechanisms to securely distribute updates for products with digital elements to ensure that exploitable vulnerabilities are fixed or mitigated in a timely manner;

(8) ensure that, where security patches or updates are available to address identified security issues, they are disseminated without delay and free of charge, accompanied by advisory messages providing users with the relevant information, including on potential action to be taken.

Again lots of common sense here.

Further requirements

The CRA also has words on vulnerability notifications and reporting, and I hope to come back to those in a later post. Of specific interest, some readings of the text would suggest that vendors help populate a live database of zero-day attacks, to be maintained by the EU. The industry has a well informed opinion on the ability of EU institutions to keep secrets. There are very legitimate worries that reporting all unpatched vulnerabilities to the EU will likely be a net negative for security.

Setting the standards

You might think that with some effort the essential requirements could be made to work (I do think so). But it turns out the essential requirements are worded too informally to form the basis of a scheme with million or billion euro fines. The European Commission will therefore request as yet unspecified European Standards Organizations (perhaps CEN) to turn the essential requirements into a European Harmonised Standard document that will tell us what ’exploitation mitigation techniques’ are adequate.

This should send a shiver down the spine of everyone who has ever worked on standardisation (I have).

As an industry, we (developers) struggle mightily to come to agreement on even the most basic of things. It might even be said that we probably don’t yet know how to create non-trivial systems that are secure. Despite this, many of us do have pretty strident opinions on how stuff should be done. And this is a toxic mix - there is no established practice we can turn into a code, but there are lots of people with hobby horses.

Interestingly enough, it appears this European standardisation mechanism has up to now only (successfully) been used for things that can be specified in terms of volts, Hertz, digital protocols, procedures or concrete numbers. Here, the Commission appears to be taking the courageous decision to use the standards process in a completely novel way, to specify how developers should program securely.

Now, the European Commission is full of smart people, and they too have realised that this standardisation might not work. The Commission therefore reserves the right to pick its own standards through implementing acts. An interim measure called a CEN Workshop Agreement (CWA) is also a possibility.

As a taste of what this could look like, ETSI standard EN 303 645 describes cybersecurity norms for consumer physical internet of things devices, inspired by the UK’s Product Security and Telecoms Infrastructure Bill.

In terms of what the EU Cyber Resilience Act will mean for everyone, these standards or acts are where the rubber meets the road. These are the things that determine if this act will make our life impossible or if the CRA will be a net positive for cybersecurity.

Who is going to write this standard?

This is not clear. It could be CEN/CENELEC or ETSI. On the 7th of February 2023 these committees held a Cybersecurity Standardisation Conference, ‘European Standardization in support of the EU Legislation’.

ETSI famously antagonised the whole security world by creating its own encryption variant that was considered insecure. Even though I understand where they are coming from, some tensions remain. The precedent from other recent EU acts is not encouraging. Such processes are also not very transparent. To get a seat at the table means being able to show up for meetings for years on end. Permanent vigilance is required because an interested party could change a few words at a late stage and completely upend the meaning of the standard. It has been noted that the European Commission and European Parliament may themselves not excel in transparency and openness, but they are far better in those respects than opaque standards meetings.

I think it would need to be abundantly clear how this standards setting process is going to work. It may even be necessary for the EU to fund participation by players who are usually not in a position to dedicate years of lawyering to such an effort. The Commission should require that the chosen standards body makes meeting documents public at both committee and working group level, including lists of members and attendees, at European as well as national level. Since the EC requests this standards development and also pays for it, this should be very doable. Because as it stands, the standard setting is a democratic void.

I have personally been part of a few standardisation processes, and it is extremely worrying how these are dominated by just a few parties, most of them non-European and with strong commercial goals to get a standard passed that works for them.

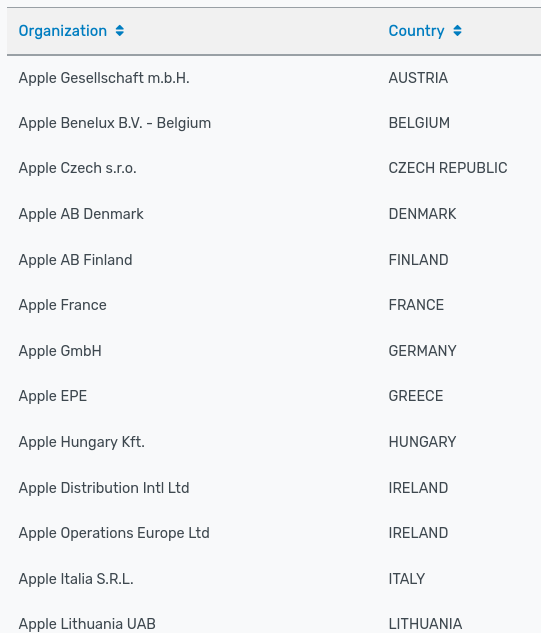

Specifically ETSI is a challenge. CEN/CENELEC have country voting, which means no company can dominate the discussions. ETSI meanwhile has direct company representation, and I was surprised to find that Apple on its own is 21 members of ETSI (!!):

Samsung is another 11 members, Qualcomm also 11, Intel 10 and Huawei 7. I’m sure this is all a coincidence and not related to the ability to get more voting power.

A well-tuned standard can be a huge commercial boon to parties with the right technology on hand, technology that is suddenly near mandatory.

19th of March update: A kind reader informed me that the EU had already taken note of the odd position of ETSI, and as of Regulation (EU) 2022/2480 of the European Parliament and of the Council, at least some key decisions can only be taken by representatives of national standards organizations, and not (say) by Apple. In addition, the EU can now set requirements for a standard, and also specify a deadline.

Conformity testing

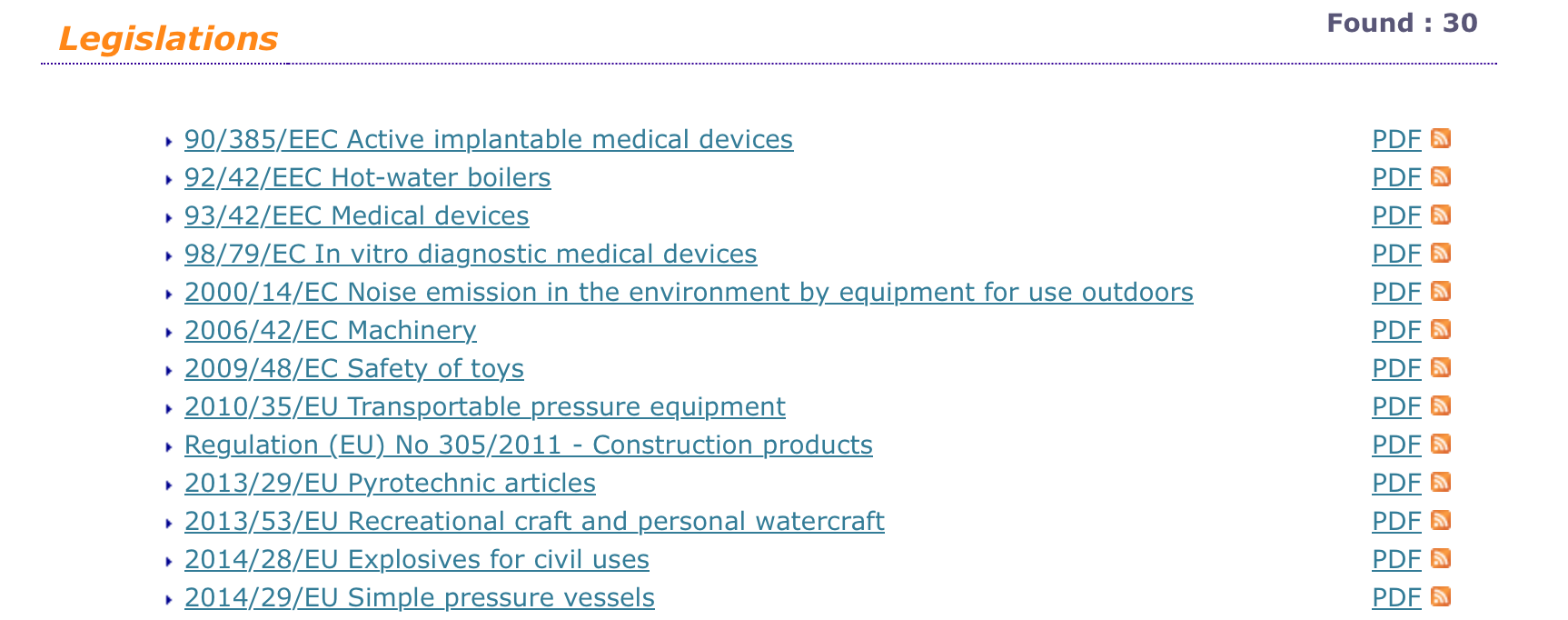

If you suspect you fall under a risk category of the CRA, you need to at the very least self-certify that your products are in conformity with the essential requirements from the act. Or, you need to determine with great certainty if you are low-risk, which is not all that clear. If you are of critical importance, you’ll have to get an external third party to determine this for you. And this isn’t just any third party, it has to be an official ‘notified body’. These can be found on the NANDO site, which currently mostly lists rather industrial sounding categories:

An insider tells me these are pretty solid organizations, but that most of them are not too hot on software, and even where they do touch digital stuff, it is mostly procedural (think ISO 27001). We’ll likely need completely new notified bodies to do anything with the CRA. Of note, ISO 27001 compliance is something completely different from CRA compliance.

Also, even if you aren’t critically important, you still need to do the assessment. It appears that this will likely mean going through the standard mentioned in the previous section. Some informed speculation suggests this might be a 750 page highly detailed document full of rules.

March 14th update: It appears a vendor could also choose not to consult the standard and instead do its own assessment of whether it conforms to the essential cybersecurity requirements. Using the standard however has the benefit that you get the ‘presumption of conformity’.

A point often missed by people advocating regulation is that it will matter for everyone who thinks they might fall under the act. So for example, if the CRA contains a carve-out for open source but it is not Rock Solid, most open source projects have to take into account that they might fall under it, and get advice on whether they do. Or, conversely, open source projects might start refusing donations out of fear they become too commercial.

Specifically, non-European open source projects might very well start to try to dissociate themselves from EU users or contributors, because of worries over potential fines. Crucially, it does not matter if these million euro fines are guaranteed - they only have to be in the realm of possibility for people to worry and take evasive action.

And for my friends in governments and institutions, you may know these huge fines will likely not be levied on small players making a first time mistake. But the small players read the texts, and see 15 million euro fines and multi-interpretable requirements. So they do worry.

So who does it apply to?

The CRA started out as product regulation, and there it is reasonably clear. If someone sells me a networked security camera, the people that marketed that camera are responsible. Often this will be very clear because the box it came in said ‘Hikvision’. The CRA however extends to anyone importing, distributing and selling such devices. You can’t opt out of the CRA because your device was made in Hangzhou, Zhejiang and the EU has no office there.

Now, these days, almost everything you buy contains software from hundreds of different places. Almost inevitably the operating system is Linux. But to perform its functions, the camera also contains web servers, vision and image libraries, mathematical libraries, databases and many many other libraries and components.

The vendor is completely on the hook for all these things. For example, they will have to establish for each of these components whether it has known vulnerabilities.
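Mechanically, the "known vulnerabilities" part of that check is a lookup of shipped component versions against published advisories. Here is a minimal sketch under that assumption. The `Advisory` type, the advisory identifiers and the component names are all hypothetical, and a real check would pull from an actual vulnerability database and handle version ranges rather than exact matches.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Advisory:
    """A published advisory (hypothetical shape, exact-version matching only)."""
    advisory_id: str
    component: str
    affected_versions: frozenset


def find_known_vulnerabilities(components, advisories):
    """Return (name, version, advisory_id) for every shipped component
    whose exact version is listed in an advisory."""
    hits = []
    for name, version in components:
        for adv in advisories:
            if adv.component == name and version in adv.affected_versions:
                hits.append((name, version, adv.advisory_id))
    return hits


# Invented data for illustration only.
shipped = [("http-example", "2.3"), ("zlib-example", "1.0")]
advisories = [
    Advisory("EXAMPLE-2023-0001", "http-example", frozenset({"2.2", "2.3"})),
    Advisory("EXAMPLE-2023-0002", "zlib-example", frozenset({"0.9"})),
]
print(find_known_vulnerabilities(shipped, advisories))
```

Note that this only covers "known". Whether a hit is actually "exploitable" in the product as shipped still takes human analysis, and that is where most of the work would be.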

In turn, the vendor can’t just accept that Linux has no security bugs, or that it complies with essential requirement (j) “provide security related information by recording and/or monitoring relevant internal activity, including the access to or modification of data, services or functions”. Someone will have to figure that out, but who?

March 14th update: When embedding third party components, a vendor must do ‘due diligence’ on these components (article 10.4 of the CRA). This appears to be deliberately vague (or flexible if you will) – if a component is not that important, this due diligence could be very light, perhaps check if the software is still being maintained and receiving security updates. If the component however has a security role (like Linux always will), even checking for a CE-mark (indicating CRA compliance) might not be enough in critical cases. For now, there appears to be no guidance on what this due diligence should mean.

Open source authors are already used to enormously irritating emails from employees of billion dollar megacorps demanding compliance documentation. This is the super weird situation where you supply software freely, and the user of that software demands that you do work so they can use your software freely.

The CRA is going to create an absolute bonanza of this stuff.

Now, a tremendously good outcome would be if those worried organizations would not harass open source projects, but would instead fund them to do security work so they can provide that documentation.

But, who do you ask if you want to know if Linux is in compliance with the essential requirements? These are rather official questions. The Linux Foundation might for example be willing to provide a document describing what Linux does for all the essential requirements, in a certain version of Linux, but they are not going to certify anything for you.

Meanwhile, Red Hat (IBM) might be willing to certify such things for you, but only as long as you use exactly their software.

There is also a lack of clarity of who the “importer” of software is when you download source code from (say) GitHub in the US.

In terms of liabilities, it is not clear where those lie. Are the opportunities for that ’exhausted’ (a legal term) when you hit the vendor who marketed the product? Or could the author of an open source security library be exposed to liability for bugs shipped by a vendor of IP cameras?

We really should know, but it appears that we don’t.

Additionally it has been noted that the CRA will cause several billion euros’ worth of compliance audits, and that there is as yet no industry ready to actually perform such audits, also because there are no known standards.

So this is very much an open problem.

It may be good to note that the US National Cybersecurity Strategy focuses on players with “market power”, which likely means a lot of smaller companies and organizations will not be impacted.

Impact on innovation and international cooperation

Companies are full of compliance departments, empowered to spot potential regulatory problems. The way these departments work is that they need to be assured there is either no risk, or that they can quantify the risk. If non-EU companies and organizations specifically worry that even free open source software licensed code can come with liabilities, they might well exclude commercially employed Europeans from their projects. Or conversely, they might start warning European users not to use their software, in an attempt to remove the product from the European market.

Update: Theo de Raadt of OpenBSD (a highly secure operating system) and OpenSSH fame has already indicated he might want to recommend EU users not to use OpenSSH for this very reason.

Where is the CRA at?

There is a proposal from the European Commission and the European Parliament has started looking at the act. The Swedish Presidency of the Council of the European Union appears to see the act as a priority, and member states are meeting to discuss the act in the coming weeks.

Given the above text, I think this is an act with admirable aims that should however not be rushed in its current shape. It has been noted that every successful large project has started as a small successful project.

If we are in such a hurry, it might be doable to pass a CRA that is boiled down to its essence (‘secure by default configuration, do not ship known exploitable vulnerabilities, limit attack surfaces, mandatory updates, rapid distribution, notification of vulnerabilities’). It might be possible to standardise these things only a few short years after the act is passed.

It has been noted that if the CRA excluded non-embedded software it would likely at least not impact a lot of academic and open source projects, but it would still have all the other challenges outlined above. The UK is coming up with a non-binding code of practice for software and apps that may be inspirational, meanwhile.

Alternatively, it might also be possible to pass a more comprehensive CRA, but then first spend a lot more time working out scenarios for embedded components and libraries and who should do the audits, and provide better guidance for the standardisers so we have more assurance they won’t go overboard.

What can you do?

If you are any kind of player in the software or hardware world, do contact your national ministry tasked with the CRA, and let them know how you think it will work out for you or your industrial sector. The NLnet Labs post has some specific guidance for open source projects.

I can also recommend reading through the proposed act itself. The European Parliament Think Tank also wrote a useful document. If you read these documents and find things that will not work for your industry or sector, please let your ministry know! I can help you find the right place if necessary.

If you are working on the CRA from a member state or the EU and have questions about this page, please do contact me (bert@hubertnet.nl), and I’ll do my best to help.

Finally

I think having a CRA is long overdue, but I hope we can get to something that doesn’t further clamp down on innovation in Europe. European innovation and capabilities are already in a weak enough place. I’m all for getting a cybersecurity Brussels Effect going, but care should be taken.

The US national cybersecurity strategy might serve as inspiration, since it also focuses on actively enhancing cybersecurity capabilities beyond just regulating devices and software.

For full transparency, I am no one’s lobbyist on this subject. I wrote all of this because I’m a Europe loving but worried software geek with some government and legislative experience.

Further reading

- Center for Data Innovation

- The ultimate list of (open source) reactions to the Cyber Resilience Act

- UK Code of Practice for Consumer IoT security

- The UK Product Security and Telecommunications Infrastructure (PSTI) Act 2022 – What does it cover?

- EU Radio Equipment Directive has run into standardisation problems, very comparable to what might happen to the CRA. The RED also appears to apply to every Raspberry-Pi, by the way.

- The TÜV Association notes that the barriers between low-risk and critical categories are pretty inconsistent, and recommends that a lot more technology become high-risk.